Not sure if this question is appropriate here, but I blow at Math and was hoping somebody could help me understand how the professor got Theta(log2n) from T(n) = Theta(1) +…Theta(1). I’m having trouble remembering how to convert logarithms and I can’t figure this simple one out. Here’s the link at the exact problem is at 34:35, thanks! https://ocw.mit.edu/courses/electrical-engineering-and-computer-science/6-006-introduction-to-algorithms-fall-2011/lecture-videos/lecture-1-algorithmic-thinking-peak-finding/

Think of logarithms as the inverse of exponentiation. When we take a number x to some power y, we’re asking for a number that is x * x * x … y times. When we take the log base y of some number x, we’re asking how many times we can divide the number x by y until we reach 1.

For example:

56 = 5 * 5 * 5 * 5 * 5 * 5 = 15,625

log515,625 = 15625 / 5 / 5 / 5 / 5… = 6

Put that aside and let’s think about the algorithm being discussed in the video. It’s recursive, and at each call we’re cutting an array in half and performing some operation on it. In this case, we don’t care what that operation is, but we assume it’s done in constant time (no matter how big the array is, it takes the same amount of time), so we say this runs in Θ(1). The big idea to take away here is that the worst case running time of the algorithm will be number of times we can divide the array by 2 times the running time of our base case. Since we know the running time of our base case, the question is, how do we calculate the number of times we can divide an array by two?

An array with length 8 can be divided in half 3 times because 8 / 2 / 2 / 2 = 1.

An array with length 32 can be divided in half 5 times because 32 / 2 / 2 / 2 / 2 / 2 = 1.

For numbers that are not evenly divisible by 2, a logarithm will give us a non-integer value. For the purpose of algorithmic analysis, we can round up to the nearest integer value. Remember that we can’t have arrays of non-integer size.

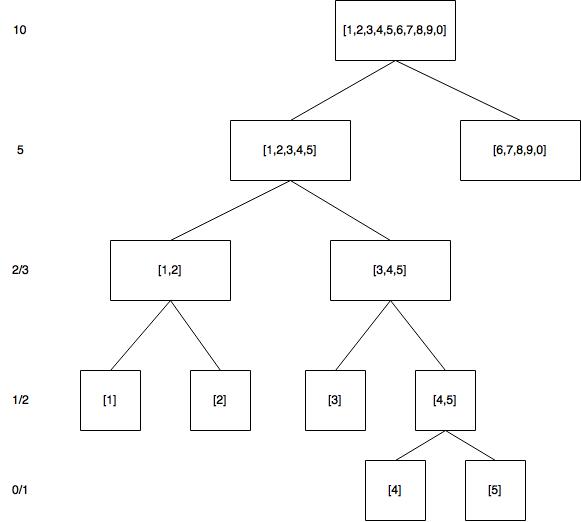

An array with length 10 can be divided in half 4 times. This is easiest to understand with an actual array, so here’s an ugly diagram I just made:

So, log210 ~= 3.322, which we round up to 4.

To generalize, for an array of size N, we can divide it by two log2N times, rounded up to the nearest whole number. What the professor is concluding is that the divide and conquer algorithm he has described will take no more than log2N * Θ(1), but at least Θ(1) operations.

That was so well articulated, thank you so much. So in this recursive algorithm, Θ(1) would be the “outer shell” and log2N * Θ(1) would be the last one that can’t break open in the Russian Doll metaphor? And the program running through this Divide and Conquer algorithm will perform at least at Θ(1)'s speed but can go as fast as (but no faster) log2N * Θ(1)'s speed?

I’m not used to thinking of recursion in terms of Russian dolls, but your description doesn’t quite seem right to me. Maybe I’m not understanding it correctly and someone else will give you a better answer.

The algorithm that’s being described in the video does two things: 1) it recursively splits the array, and 2) it returns the highest value it has. (1) technically does take constant time, but we don’t need to account for that in our calculations. It happens log2N times for the reason you’ve just learned. (2) is the base case, which I think is why you’ve described it as the inner most matryoshka doll, but it doesn’t only happen in the base case. The function will compare two numbers and return the highest. It will do this at each “level”, so log2N times. This is a bit abstract and may be difficult to understand at first, so I’ll write this out in JavaScript real quick:

I didn’t actually watch the full video, so I may not have this exactly right, but I think I got the gist of it. Notice that it’s comparing left to right every single time we split an array. That’s a constant time operation - the time it takes to compare two values does not change with the total number of values - but it happens once every time we split an array. So don’t think of the Θ(1) operation as just happening at the “end”.

I hope that helps more than it confuses. This stuff can take a while to really sink in, so take your time with it.

Right idea, but flip that around. The algorithm will take at least 1 “operation”, but will never do more than log2N.