I made a short Bitcoin simulation game in which you roll a 10000-faced die and receive some fake bitcoin based on the number rolled.

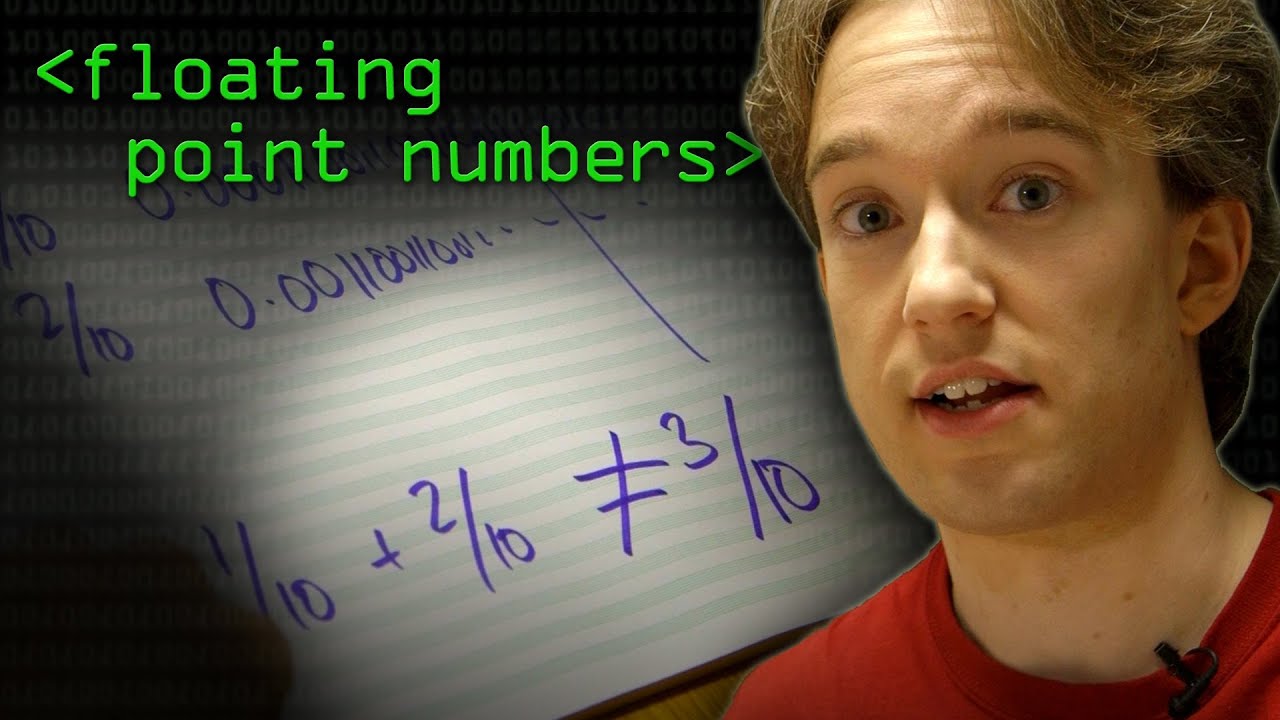

But after I rolled a few times, it added 0000000000000# to the end of the number.

My code:

    import random

    btc = 0

    print(
    '''
    Copyright 2022 Fappy and Jason TM
    Type help to begin.
    WARNING THIS GAME IS NOT LEGIT AND IS JUST USED FOR FUN.
    '''
    )

    game_is_running = True

    while game_is_running:
        cmd = input(">>> ").upper()
        if cmd == "HELP":
            print(
    '''
    Type roll to roll the wheel
    you can roll every 1 minute
    Type risk to play the multiplier game.
    '''
            )
        elif cmd == "ROLL":
            num = random.randint(0,10000)
            # Check the jackpot first; placed after `num >= 3001` it could never run.
            if num == 10000:
                btc += 1
            elif num <= 3000:
                btc += 0.001
            else:
                btc += 0.1
            print("You rolled ", str(num), " you have ", str(btc))
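The trailing digits can probably be reproduced without the game loop. The sketch below assumes the cause is binary floating-point rounding when values like 0.1 are added repeatedly, which the post itself does not confirm. The helper `add_repeatedly` is a hypothetical name introduced only for this illustration, and `Decimal` and `round` are shown as two possible workarounds rather than the required fix.

```python
from decimal import Decimal


def add_repeatedly(step, times):
    """Add `step` to a running total `times` times, like repeated rolls."""
    total = step * 0  # zero of the same type as `step` (float or Decimal)
    for _ in range(times):
        total += step
    return total


# Plain floats: 0.1 has no exact binary representation, so the error shows up.
float_total = add_repeatedly(0.1, 3)
print(float_total)  # 0.30000000000000004

# Option 1 (assumption: exact decimal amounts are wanted): use Decimal.
decimal_total = add_repeatedly(Decimal("0.1"), 3)
print(decimal_total)  # 0.3

# Option 2: keep floats but round the value when displaying it.
print(round(float_total, 3))  # 0.3
```

In the game this would mean either starting with `btc = Decimal("0")` and adding `Decimal("0.001")`, `Decimal("0.1")`, and `Decimal("1")`, or leaving the arithmetic alone and printing `round(btc, 3)`.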